Scroll through LinkedIn on any given morning and most of the posts read the same way. The vocabulary blurs together and the writing sounds like it could have come from anyone. Originality.AI tracked 8,795 LinkedIn posts and found that over half of long-form content is now likely AI-generated.⁴ The platform built for demonstrating expertise has become a wall of interchangeable text…

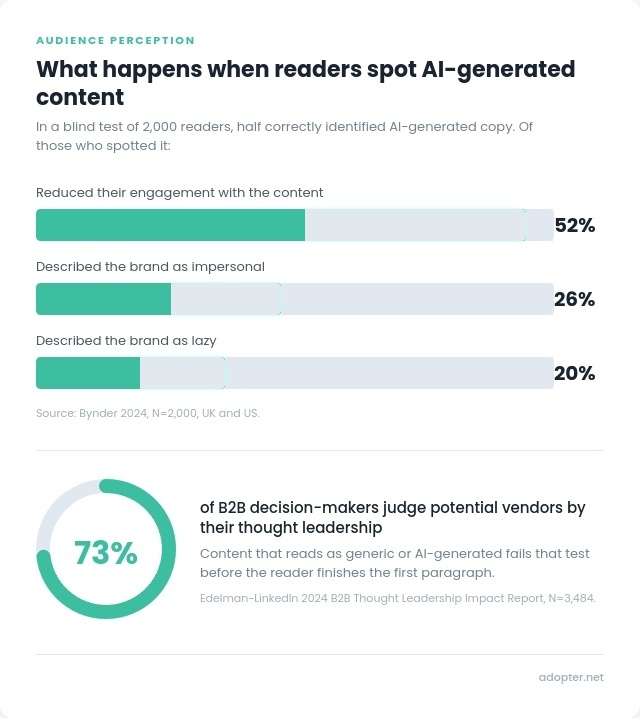

Readers have noticed too. In a controlled study of 2,000 people, Bynder found that half could correctly identify AI-generated copy. Of those who spotted it, 52% disengaged, 26% described the brand as impersonal, and 20% called it lazy.²

The patterns behind that reaction are well-documented. Researchers at the University of Tübingen tracked vocabulary across 15 million scientific abstracts and found 454 words spiking in usage since ChatGPT launched. The word “delves” appeared at 28 times its expected frequency.¹ The same patterns show up in marketing content.

The Kobak study identified 379 excess style words in 2024, with 66% verbs and 14% adjectives.¹ None of these words are problems on their own. The signal is frequency and clustering. A sentence like “This comprehensive framework leverages innovative approaches to navigate the evolving landscape” contains six of them. Any one would pass without comment. Six in eleven words becomes a fingerprint.

Verbs: delve, underscore, showcase, leverage, utilize, foster, navigate, enhance, streamline, harness, craft, spearhead, bolster, unpack

Adjectives: crucial, pivotal, comprehensive, intricate, meticulous, robust, nuanced, innovative, groundbreaking, seamless, vibrant, dynamic, multifaceted

Nouns and phrases: landscape, tapestry, realm, paradigm, synergy, ecosystem, framework, cornerstone, catalyst

Filler: it’s worth noting, importantly, notably, furthermore, in today’s rapidly evolving..., at the end of the day
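If you want a rough way to check a draft for clustering, the idea can be sketched in a few lines of Python. This is a minimal sketch, not a detector: the word set is copied from the verb, adjective and noun lists above, while the function names (`candidates`, `flagged_words`) and the crude suffix stripping are assumptions made for illustration. It reports which listed words appear in a sentence and how dense they are.

```python
import re

# Word lists copied from the article (verbs, adjectives, nouns).
FLAGGED = {
    "delve", "underscore", "showcase", "leverage", "utilize", "foster",
    "navigate", "enhance", "streamline", "harness", "craft", "spearhead",
    "bolster", "unpack",
    "crucial", "pivotal", "comprehensive", "intricate", "meticulous",
    "robust", "nuanced", "innovative", "groundbreaking", "seamless",
    "vibrant", "dynamic", "multifaceted",
    "landscape", "tapestry", "realm", "paradigm", "synergy", "ecosystem",
    "framework", "cornerstone", "catalyst",
}


def candidates(token):
    """Yield the token plus crude de-inflected guesses (hypothetical helper).

    This is an assumption of the sketch, not real stemming:
    "leverages" -> "leverage", "navigating" -> "navigate".
    """
    yield token
    for suffix in ("s", "es", "d", "ed", "ing"):
        if token.endswith(suffix):
            stem = token[: -len(suffix)]
            yield stem
            yield stem + "e"


def flagged_words(sentence):
    """Return (listed words found, total word count) for one sentence."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    hits = [t for t in tokens if any(c in FLAGGED for c in candidates(t))]
    return hits, len(tokens)


if __name__ == "__main__":
    sample = ("This comprehensive framework leverages innovative approaches "
              "to navigate the evolving landscape")
    hits, total = flagged_words(sample)
    print(hits)
    print(f"{len(hits)} of {total} words are on the list")
```

A single hit means nothing. The number worth watching is how many hits land in one sentence or paragraph, and any cut-off you pick for that is a judgment call rather than something the research prescribes.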


Swapping out flagged words is the easy part. These structural patterns sit underneath the vocabulary and are harder to spot in your own writing.

Uniform sentence length. Most writers naturally vary their sentence length without thinking about it. A quick thought gets a short sentence. A complex point runs longer. AI text doesn’t do this. It stays in a narrow band, sentence after sentence, with little variation.
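One rough way to see this in your own draft is to measure how much sentence lengths vary. The sketch below is an illustration under stated assumptions: it splits sentences naively on end punctuation, and it uses the coefficient of variation (standard deviation divided by mean) as the measure, which is a choice made here and not a method from the studies cited. The function names are hypothetical.

```python
import re
import statistics


def sentence_lengths(text):
    """Word count per sentence, using a naive split on ., ! and ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(re.findall(r"\w+(?:['’-]\w+)*", s)) for s in sentences]


def length_variation(text):
    """Coefficient of variation of sentence length (0.0 = perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    mean = statistics.mean(lengths)
    return statistics.stdev(lengths) / mean if mean else 0.0


if __name__ == "__main__":
    uniform = "The cat sat down here. The dog ran off fast. The bird flew up high."
    varied = ("Short one. This sentence is quite a bit longer than "
              "the first one was. Tiny.")
    print(sentence_lengths(uniform), round(length_variation(uniform), 2))
    print(sentence_lengths(varied), round(length_variation(varied), 2))
```

A lower score means the sentences sit in a narrower band. Treat it as a prompt to reread the passage aloud, not as a verdict, since abbreviations and decimals will trip up the naive splitter.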

“It’s not X, it’s Y” contrasts. The single most common AI rhetorical move. “The problem isn’t visibility. It’s credibility.”

Setup sentences. “Here’s where it gets interesting.” “That has a straightforward solution.” These exist to announce a point rather than deliver one.

Formulaic paragraphs. Topic sentence, supporting evidence, summary. Identical structure in every section, every time.

Rule of three. Three parallel phrases, examples, or adjectives grouped for rhetorical effect. It’s a legitimate writing technique, but AI defaults to it so consistently that frequent use across a piece reads as generated.

Lists disguised as prose. Four items in a comma-separated list crammed into a single sentence. Enumeration pretending to be narrative.

Motivational wrap-ups. Final sentences that add no information. “At the end of the day, it’s about building real connections.” If the last line of a section could be deleted without losing anything, it probably should be.

Em dash overuse. Most writers don’t have an em dash on their keyboard and would naturally reach for a hyphen or a full stop. Em dashes are valid punctuation, but they’ve become so strongly associated with AI output that even one or two in a post can be enough for readers to assume the whole thing was generated.

Perfect grammar throughout. No variation from standard rules anywhere in the piece. Human writers break rules on purpose and vary their level of polish across a draft.

No genuine specifics. Generic examples, no named projects or regulations, no numbers from actual work. Content that could describe any company in any sector.

Register breaks. The tone shifts from conversational to academic to salesy within the same piece, as though different sections were written separately and stitched together.


A formal writing style doesn’t make someone sound like AI. Writers trained in academic English, non-native speakers taught to write with more structure, or anyone who prefers a formal register can all produce content that triggers one or two items on this list. Any single tell in isolation could show up in perfectly good human writing. AI models learned to write from well-structured, grammatically correct, carefully edited text, so there’s a natural overlap between polished human writing and AI output.

The tell is when they stack up. When a post has em dashes, three words from the overused list, uniform sentence length, and a motivational wrap-up in the closing line, the pattern becomes hard to miss. Well-written content has personality, specific knowledge, and rough edges that come from a real person working in a real sector. AI-generated content has polish but no fingerprints. When enough tells accumulate in one piece, readers register the difference.

Most companies using AI for content are just trying to keep up. There’s always more to publish and never enough time to write it all. The problem is that light-touch AI use produces content that looks like everyone else’s light-touch AI use.

In Edelman and LinkedIn’s 2024 research, 73% of B2B decision-makers said thought leadership is a more trustworthy basis for judging a vendor than its marketing materials.³ Content that reads as generic or templated fails that test before the reader finishes the first paragraph. The filter is whether your writing sounds like someone who works in your sector produced it. If you could swap in any industry name and the sentences would still make sense, that content reads as AI-generated.

Specificity also makes content more interesting to read. Across 642 LinkedIn posts we tracked over 12 months, posts built around named regulations, quantified outcomes, and concrete project detail outperformed generic posts by 23-37% on engagement, reaching more of the specialist buyers already researching companies in their sector.

Word-level tells include overuse of verbs like delve, showcase, and leverage, adjectives like crucial and comprehensive, and filler phrases like “it’s worth noting.” Structural tells include uniform sentence length, formulaic paragraph construction, and “it’s not X, it’s Y” contrasts. No single tell is proof of anything - formal writers and non-native English speakers can trigger individual items. The signal is when several stack up in one piece. A 2025 Science Advances study identified 379 excess style words across 15 million academic abstracts.¹

Yes. Bynder’s 2024 study (N=2,000, UK and US) found 50% of readers correctly identified AI-generated copy in a blind test. When they spotted it, over half disengaged and a quarter described the brand as impersonal.²

It removes the obvious ones. Swapping “delve” for a different verb takes seconds. Structural patterns are harder. Uniform sentence rhythm, formulaic paragraphs, the absence of real specifics - those run through the architecture of the piece. Light editing cleans the surface without changing how the content is built. Substantive rewriting works. Find-and-replace doesn’t.

Because buyers use content to judge whether a company has genuine expertise. Edelman and LinkedIn’s 2024 research (N=3,484 global executives) found 73% of decision-makers consider thought leadership a more trustworthy basis for evaluating vendors than marketing materials.³ Content that reads as generic or AI-generated suggests the company didn’t have enough to say to write it themselves.


¹ Kobak et al. (2025), “Delving into LLM-assisted writing in biomedical publications through excess vocabulary,” Science Advances 11(27). 15 million PubMed abstracts, 2010-2024.

² Bynder (2024), AI vs Human Content study. N=2,000, UK and US.

³ Edelman-LinkedIn (2024), B2B Thought Leadership Impact Report. N=3,484 global business executives.

⁴ Originality.AI (2024), AI Content on LinkedIn study. 8,795 posts analysed.

Matt Jaworski is co-founder of Adopter, Europe's first marketing agency to specialise in climate tech and adaptation. He works with clients across five continents, spanning biotech, construction, finance, and energy. His focus sits across web design and development, brand strategy and messaging, and GEO/SEO - helping climate tech companies build a credible, findable presence online. Matt also mentors at IndieBio, Conception X, and C13.